
The Economic Barriers That Make Many Sites Invisible to AI Search Systems in 2026

In 2026, the internet looks automated, but the economics behind it are anything but simple. Most websites today rely on cloud infrastructure such as AWS, Azure, or Vercel, which promises flexibility, reliability, and “infinite” scale. What’s often overlooked is that these promises come with hidden costs, and those costs influence how sites behave, how bots are treated, and ultimately who gets discovered in AI-driven search systems.

Bots used to be a minor technical nuisance; now, they are an economic factor.

Modern AI crawlers and scraping tools consume bandwidth and compute at unprecedented scale. Industry estimates suggest that bots account for up to 80 percent of web traffic, with AI-driven scrapers growing rapidly as organizations increasingly rely on automated data collection (DesignRush, 2024). Every bot request costs money. Bandwidth isn’t free, and cloud providers charge not just for storage and compute but also for data egress — the act of sending information out of their servers.

For sites hosting hundreds of gigabytes, a single aggressive AI crawl can produce surprise bills in the hundreds or thousands of dollars (B&H Proxy, 2024).
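To make the arithmetic concrete, here is a minimal sketch of how egress charges grow with the volume a crawler pulls. The flat per-gigabyte rate and the outbound volumes are illustrative assumptions, not figures from any provider's price list or from the sources cited above. Real pricing is usually tiered and varies by provider and region.

```python
def egress_cost_usd(gigabytes_out: float, rate_per_gb: float) -> float:
    """Return the egress charge for an outbound volume at a flat per-GB rate."""
    if gigabytes_out < 0 or rate_per_gb < 0:
        raise ValueError("volume and rate must be non-negative")
    return gigabytes_out * rate_per_gb


# Assumed flat rate in USD per GB (hypothetical; check your provider's pricing).
ASSUMED_RATE_PER_GB = 0.09

# Hypothetical monthly outbound volumes attributable to crawler traffic, in GB.
for volume_gb in (500, 2_000, 10_000):
    cost = egress_cost_usd(volume_gb, ASSUMED_RATE_PER_GB)
    print(f"{volume_gb:>6} GB of crawler egress: ${cost:,.2f}")
```

Under these assumptions the bill scales linearly with transferred volume, so a crawler that re-fetches large assets repeatedly costs proportionally more each time.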

This creates a difficult choice for website operators. Allowing bots to index content is essential for visibility in AI search, but every extra request comes with a tangible financial cost. Many sites respond by blocking bots entirely. This decision is often less about hiding content and more about survival, as the economic reality forces organizations to protect their bottom line even at the cost of long-term discoverability.

The stakes are even higher because of the “elastic” nature of cloud infrastructure. Cloud providers advertise infinite scalability, but in practice that elasticity translates directly into added costs. When a botnet or aggressive AI scraper hits a site, autoscaling provisions additional virtual machines, with the CPU and memory that come with them, to handle the load, and each new instance adds to the monthly bill. For smaller organizations, blocking bots becomes a necessary measure to prevent financial ruin (Check AI Bots, 2024).

Bot traffic also complicates analytics and reporting. Many organizations subscribe to marketing platforms that charge based on traffic volume, monthly active users, or events. When a significant portion of that traffic is automated, companies pay for “phantom” traffic that has no commercial value. This often leads to stricter bot-blocking policies, which protect budgets and clean analytics data but simultaneously cut off the machine-to-machine traffic that powers modern AI search systems (Botify, 2024).
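As a rough illustration of how “phantom” traffic can be estimated, the sketch below flags events whose user-agent string looks automated and reports their share of the total. The marker substrings and the sample user agents are hypothetical. This is a naive heuristic, since many automated clients do not identify themselves, and it is not how any particular analytics platform classifies traffic.

```python
from typing import Iterable

# Hypothetical substrings that self-identified bots often include.
BOT_MARKERS = ("bot", "crawler", "spider")


def is_probable_bot(user_agent: str) -> bool:
    """Return True if the user agent contains any known bot marker."""
    ua = user_agent.lower()
    return any(marker in ua for marker in BOT_MARKERS)


def phantom_share(user_agents: Iterable[str]) -> float:
    """Fraction of events whose user agent looks automated (0.0 if empty)."""
    total = 0
    bots = 0
    for ua in user_agents:
        total += 1
        if is_probable_bot(ua):
            bots += 1
    return bots / total if total else 0.0


# Made-up sample of user agents taken from billable analytics events.
sample = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "ExampleCrawler/2.1",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 14_0)",
]
print(f"{phantom_share(sample):.0%} of sampled events look automated")
```

Multiplying that share by a platform's per-event or per-user price gives a first-order estimate of what the automated traffic costs.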

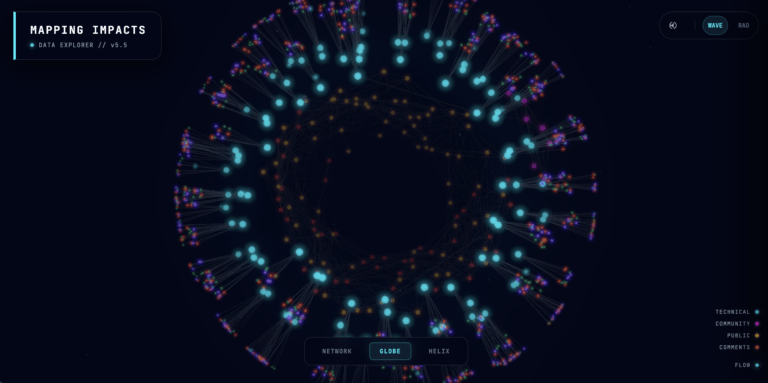

Research supports this trend. Studies of robots.txt behavior and bot policies indicate that reputable sites are increasingly using bot-blocking rules to manage infrastructure costs, even though doing so may reduce their visibility in AI search and generative engines (arXiv, 2025). This creates a paradox: cloud infrastructure enables massive scale, yet the costs of being discovered by AI incentivize self-limiting behavior.
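For readers unfamiliar with how such rules work, here is a small sketch that uses Python's standard `urllib.robotparser` to evaluate a robots.txt policy blocking two AI-related crawlers while allowing everyone else. The policy text, the choice of crawlers, and the example URL are illustrative assumptions, not rules taken from any site discussed here. Note that robots.txt is advisory: it only affects crawlers that choose to honor it.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: block two AI-related crawlers, allow all other agents.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def can_crawl(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ("GPTBot", "CCBot", "Googlebot"):
    allowed = can_crawl(ROBOTS_TXT, agent, "https://example.com/articles/1")
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A policy like this saves the bandwidth that compliant AI crawlers would otherwise consume, and it also removes the site from whatever index or dataset those crawlers feed.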

This is exactly why we’ve chosen to avoid subscription-based cloud services. Rather than tying our growth to external billing models and vendor dependency, we’ve invested in building our own infrastructure from the ground up, learning and iterating as we go. By owning our servers and managing our resources directly, we remove the economic friction that forces so many sites to block bots. What others see as a liability (heavy bot traffic), we treat as an asset, allowing AI systems to fully index and understand our content without incurring surprise costs.

The result is clear. Sites most dependent on cloud economics often become invisible to AI. Those that invest in bare-metal infrastructure or otherwise decouple from per-request billing can allow bots to index freely. For them, bot traffic is not a liability but an asset. By enabling AI systems to map their content comprehensively, these organizations position themselves as authoritative nodes in the emerging AEO (Answer Engine Optimization) and GEO (Generative Engine Optimization) landscape.

In the AI-powered web, the choice to block or allow bots is no longer a technical decision alone — it is an economic and strategic decision that determines whether content will be seen, cited, and referenced in the years to come.