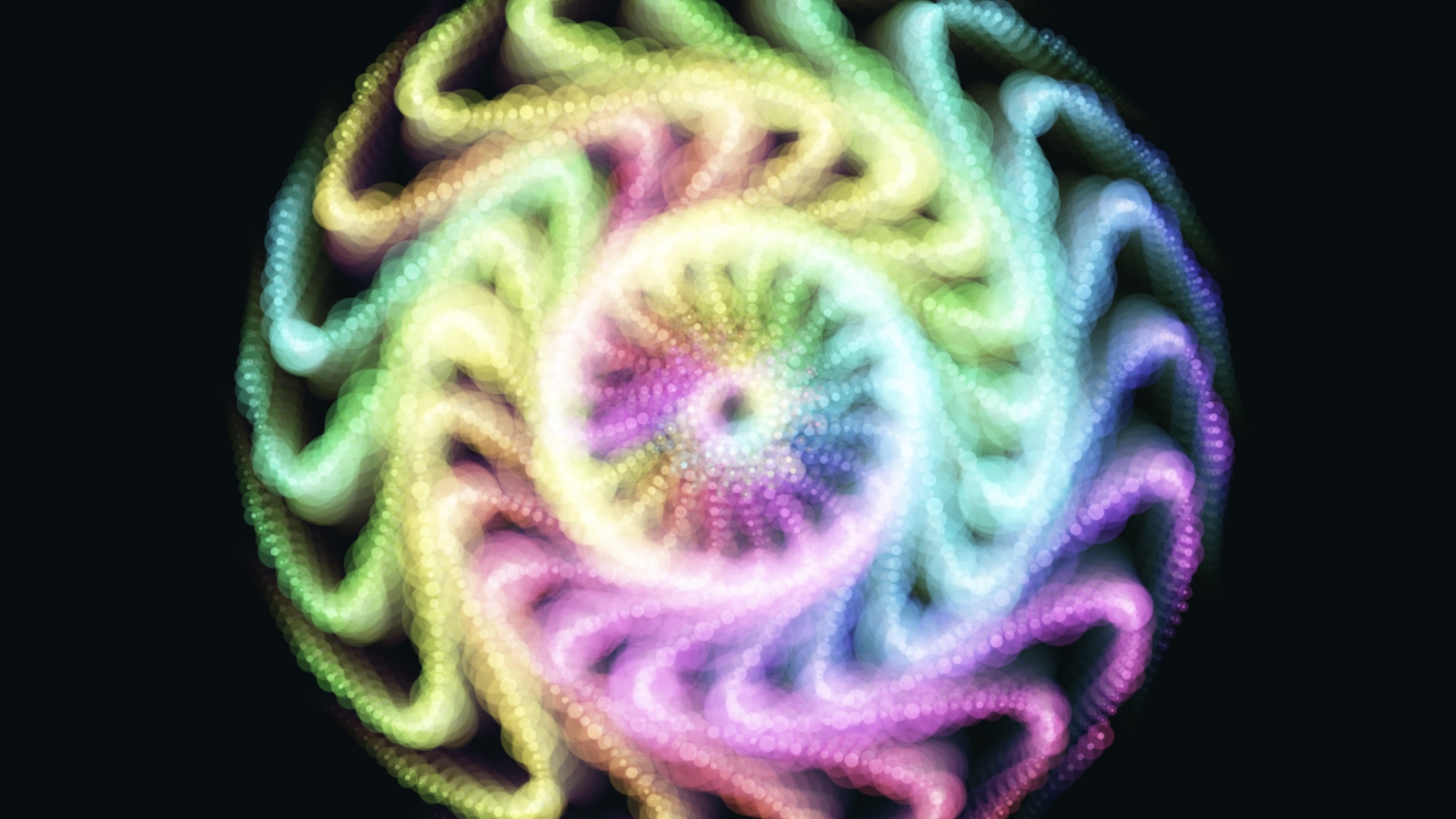

A screenshot from this week's project: Creating an audio visualizer with sound effects.

The Making of Particles 4

Have you ever heard a bassline so deep you could almost see it moving through the air? That was the ambition behind Particles 4, a generative audio visualizer by Tony Eetak and Jamie Bell that transforms raw sound into an immersive, responsive light environment. More than a media player, it’s a living digital canvas — one that reacts, breathes, and reshapes itself in real time.

Building it meant navigating the delicate line between chaos and control. Early prototypes were spectacular and overwhelming: bass drops triggered particle blooms so intense they washed the screen in blinding white, like staring into a digital sun. To regain balance, the team reworked the rendering pipeline, dialing back alpha values, replacing additive blending with a softer screen blend mode, and fine-tuning size multipliers so the visuals amplified the music instead of overpowering it.
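To see why that blending change tames the white-out, here is a minimal sketch that compares the two blend formulas on a single color channel. It is an illustration only, not the project's actual renderer: the function names, the particle count, and the brightness and alpha values are all assumptions chosen for the example.

```typescript
// Hypothetical helpers for illustration. Channel values are normalized to [0, 1].

/** Additive blending: add the incoming light and clamp. Overlaps saturate quickly. */
function blendAdditive(dst: number, src: number, alpha: number): number {
  return Math.min(1, dst + src * alpha);
}

/** Screen blending: invert, multiply, invert. The result approaches 1 gradually. */
function blendScreen(dst: number, src: number, alpha: number): number {
  return 1 - (1 - dst) * (1 - src * alpha);
}

/** Stack `count` identical particles on one pixel that starts out black. */
function stackParticles(
  blend: (dst: number, src: number, alpha: number) => number,
  count: number,
  brightness: number,
  alpha: number,
): number {
  let pixel = 0;
  for (let i = 0; i < count; i++) {
    pixel = blend(pixel, brightness, alpha);
  }
  return pixel;
}

// Five overlapping particles, each contributing 0.6 * 0.5 = 0.3 to the channel.
const additiveResult = stackParticles(blendAdditive, 5, 0.6, 0.5); // clamps to pure white (1)
const screenResult = stackParticles(blendScreen, 5, 0.6, 0.5);     // about 0.83, still short of white

console.log({ additiveResult, screenResult });
```

With additive blending, four overlapping particles are already enough to hit full white in this example, while the screen formula leaves headroom no matter how many particles pile up. Lowering `alpha` softens both modes further.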

What makes Particles 4 compelling is its range of reactive worlds. In “Stardust Galaxy,” thousands of micro-particles drift and pulse with atmospheric subtlety. Switch to “Bouncing 3D Plane,” and gravity-driven spheres collide with a virtual floor in sync with each kick drum. “Fractal Bloom” unfolds like a neon organism, expanding and twisting according to sub-bass frequencies. The result is a satisfying translation of mathematics into motion — audio data becoming something organic and alive.
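The gravity-and-floor behavior described for “Bouncing 3D Plane” can be sketched with a few lines of physics. This is a guess at the general technique (semi-implicit Euler integration with a restitution factor), not the project's code: the `Sphere` shape, the constants, and the `kick` helper are hypothetical.

```typescript
// Hypothetical sketch: one sphere bouncing on a floor along the vertical axis only.

interface Sphere {
  y: number;      // height of the sphere's center
  vy: number;     // vertical velocity
  radius: number;
}

const GRAVITY = -9.8;    // assumed downward acceleration, units per second squared
const RESTITUTION = 0.8; // assumed fraction of speed kept after each bounce

/** Advance the sphere by `dt` seconds and resolve a collision with the floor. */
function stepSphere(s: Sphere, dt: number, floorY: number = 0): Sphere {
  let vy = s.vy + GRAVITY * dt; // update velocity first (semi-implicit Euler)
  let y = s.y + vy * dt;        // then move using the new velocity

  if (y - s.radius < floorY) {
    y = floorY + s.radius;      // push the sphere back onto the floor
    vy = -vy * RESTITUTION;     // reflect the velocity and lose some energy
  }
  return { y, vy, radius: s.radius };
}

/** Give the sphere an upward impulse, for example when a kick drum is detected. */
function kick(s: Sphere, strength: number): Sphere {
  return { y: s.y, vy: s.vy + strength, radius: s.radius };
}

// Usage: drop a sphere, let it settle for two seconds, then launch it on a beat.
let sphere: Sphere = { y: 3, vy: 0, radius: 0.5 };
for (let frame = 0; frame < 120; frame++) {
  sphere = stepSphere(sphere, 1 / 60);
}
sphere = kick(sphere, 6);
console.log(sphere);
```

Tying `kick` to a measure of low-frequency energy is one way to keep the bounces in sync with the drums; how the real visualizer detects a kick is not stated in this write-up.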

Where the Real Fun Begins

Beyond the visuals, Particles 4 is a hands-on exploration of digital literacy, music, and applied AI. It invites people to experiment — to tweak parameters, test inputs, and watch how small changes in frequency data reshape an entire visual ecosystem. It makes abstract concepts like FFT analysis, signal processing, and procedural generation tangible and playful.
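For readers curious how frequency data can drive a visual parameter, here is a small sketch. It assumes the spectrum arrives as an array of byte magnitudes (0 to 255), which is the shape the Web Audio API's `AnalyserNode.getByteFrequencyData` fills in; whether Particles 4 uses that API is an assumption. The function names, the band limits, and the size multiplier are hypothetical.

```typescript
// Hypothetical sketch: turn FFT bin magnitudes into a particle size.

/**
 * Average energy in the band [lowHz, highHz], normalized to the range [0, 1].
 * `bins` holds one byte magnitude per frequency bin; bin i covers frequencies
 * near i * sampleRate / fftSize.
 */
function bandEnergy(
  bins: Uint8Array,
  sampleRate: number,
  fftSize: number,
  lowHz: number,
  highHz: number,
): number {
  const binWidth = sampleRate / fftSize;
  const first = Math.max(0, Math.floor(lowHz / binWidth));
  const last = Math.min(bins.length - 1, Math.ceil(highHz / binWidth));
  if (last < first) return 0;

  let sum = 0;
  for (let i = first; i <= last; i++) {
    sum += bins[i];
  }
  return sum / ((last - first + 1) * 255);
}

/** Scale a base particle size by band energy. `multiplier` is a tunable knob. */
function particleSize(baseSize: number, energy: number, multiplier: number): number {
  return baseSize * (1 + energy * multiplier);
}

// Usage with synthetic data: a loud sub-bass signal in the lowest four bins.
const spectrum = new Uint8Array(1024); // 1024 bins corresponds to an FFT size of 2048
for (let i = 0; i < 4; i++) {
  spectrum[i] = 255;
}
const subBass = bandEnergy(spectrum, 48000, 2048, 20, 60);
const size = particleSize(2, subBass, 1.5);
console.log({ subBass, size });
```

Changing `lowHz`, `highHz`, or `multiplier` and watching the output shift is the same kind of experiment the visualizer invites, just without the graphics.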

As a learning tool, it bridges disciplines. Musicians start to see structure in sound. Coders begin to feel rhythm inside algorithms. Youth experimenting with AI and creative coding can trace a direct line from data to expression. Instead of treating artificial intelligence and generative systems as opaque “black boxes,” the project exposes the mechanics — showing how math, physics, and machine logic translate into art.

There’s something deeply satisfying about watching your own voice explode into color through a microphone input, or loading a heavy track and seeing “Kinetic Swarm” fracture and reform with every drop. It becomes less about passive consumption and more about participation. You’re not just listening to music — you’re collaborating with it.

Now that the system runs smoothly, Particles 4 stands at a compelling intersection of web engineering, digital art, and creative technology education. It encourages curiosity. It rewards experimentation. And most importantly, it makes complex ideas feel intuitive, kinetic, and alive.

Check it out at: https://melgundrecreation.ca/vis/vis4/